
Caption: Figure 1.

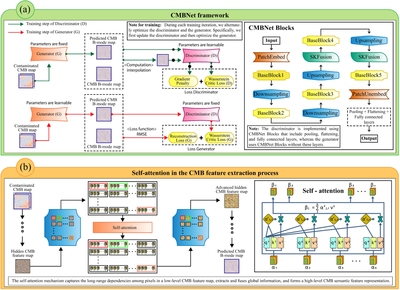

CMBNet and self-attention in the CMB feature extraction process. Panel (a), left: the overall architecture and training process of CMBNet, which consists of a generator and a discriminator. Training follows the WGAN-GP framework and alternates between two steps: “updating the discriminator parameters” and “updating the generator parameters.” In the discriminator step, the generator’s parameters are held fixed. The contaminated CMB map is fed to the generator to produce a predicted CMB B-mode map. The predicted map and the true (clean) CMB B-mode map are then fed to the discriminator to compute the Wasserstein critic loss (D). In addition, a gradient penalty is computed on random linear interpolations between the clean and predicted CMB B-mode maps. The total discriminator loss, a weighted sum of the Wasserstein critic loss (D) and the gradient penalty, is backpropagated to update the discriminator’s parameters. In the generator step, the discriminator’s parameters are held fixed. The contaminated CMB map is again fed to the generator, and the resulting predicted CMB B-mode map is passed to the discriminator to compute the Wasserstein critic loss (G). At the same time, the root-mean-square error (RMSE) between the predicted and clean CMB B-mode maps is computed; this is the reconstruction loss. The total generator loss, a weighted sum of the Wasserstein critic loss (G) and the reconstruction loss, is backpropagated to update the generator’s parameters. Panel (a), right: a schematic of the CMB block, the core network layer and the basic building unit of both the generator and the discriminator.
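The two alternating WGAN-GP loss computations described above can be sketched numerically. This is a minimal NumPy illustration, not the CMBNet implementation: the CMB-block discriminator is replaced by a hypothetical toy critic D(x) = v·tanh(Wx) whose input gradient is written out by hand (a real model would use automatic differentiation), `pred` is a stand-in for the generator output, and the penalty weight `lam=10.0` and reconstruction weight `alpha=1.0` are assumed values, since the caption only states that the losses are combined as weighted sums. Sign conventions follow the standard WGAN-GP formulation: the critic minimizes E[D(pred)] − E[D(clean)] + λ·E[(‖∇D(x̂)‖₂ − 1)²], and the generator minimizes −E[D(pred)].

```python
import numpy as np

def critic(x, W, v):
    """Hypothetical toy critic D(x) = v . tanh(W x), standing in for the CMB-block discriminator."""
    return float(v @ np.tanh(W @ x.ravel()))

def critic_grad_x(x, W, v):
    """Analytic gradient dD/dx of the toy critic (autodiff would do this in practice)."""
    h = np.tanh(W @ x.ravel())
    return (W.T @ (v * (1.0 - h ** 2))).reshape(x.shape)

def discriminator_loss(clean, pred, W, v, rng, lam=10.0):
    """Discriminator step: Wasserstein critic loss (D) + lam * gradient penalty (lam is assumed)."""
    loss_w = (np.mean([critic(x, W, v) for x in pred])
              - np.mean([critic(x, W, v) for x in clean]))
    # Gradient penalty on random linear interpolations between clean and predicted maps
    eps = rng.uniform(size=len(clean))
    gp = np.mean([
        (np.linalg.norm(critic_grad_x(e * xc + (1.0 - e) * xp, W, v)) - 1.0) ** 2
        for e, xc, xp in zip(eps, clean, pred)
    ])
    return loss_w + lam * gp, loss_w, gp

def generator_loss(pred, clean, W, v, alpha=1.0):
    """Generator step: Wasserstein critic loss (G) + alpha * RMSE reconstruction loss (alpha is assumed)."""
    loss_w = -np.mean([critic(x, W, v) for x in pred])
    rmse = np.sqrt(np.mean((pred - clean) ** 2))
    return loss_w + alpha * rmse, loss_w, rmse

rng = np.random.default_rng(0)
n_maps, side, hidden = 4, 8, 16
W = rng.normal(scale=0.1, size=(hidden, side * side))
v = rng.normal(size=hidden)
clean = rng.normal(size=(n_maps, side, side))      # stand-in for clean B-mode maps
pred = clean + 0.1 * rng.normal(size=clean.shape)  # stand-in for generator output
d_total, d_w, gp = discriminator_loss(clean, pred, W, v, rng)
g_total, g_w, rmse = generator_loss(pred, clean, W, v)
print(d_total, g_total)
```

Only the loss values are computed here; in each step the corresponding total loss would be backpropagated and only that network's parameters updated, while the other network's parameters stay fixed.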
Both the generator and the discriminator are built from CMB blocks, but their output heads differ and their parameters are entirely independent, with no sharing. The discriminator’s output head, indicated by the dashed box, adds a pooling layer, a flattening layer, and fully connected layers that produce a scalar score measuring the quality of the input map. The generator omits the dashed-box layers; its output is a map of the same size as the input, namely the predicted CMB B-mode map. The layer-by-layer parameters of both the discriminator and the generator are detailed in Appendix A. Panel (b), left: how the network layers that contain self-attention in the generator or discriminator use it to refine a low-level feature map (hidden CMB feature map) into a more expressive high-level feature map (advanced hidden CMB feature map). The low-level feature map is first converted into low-level feature tokens; these tokens pass through the self-attention layers to yield high-level feature tokens, which are finally transformed back into the high-level feature map. Panel (b), right: a schematic of the self-attention mechanism, visualizing how it refines low-level feature tokens into high-level feature tokens. The detailed mathematical formulation of self-attention is given in Appendix B.
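The discriminator’s output head and the map → tokens → self-attention → map round trip of panel (b) can be sketched as follows. This is a hedged NumPy illustration under assumptions the caption does not state: each spatial position of a (C, H, W) feature map becomes one C-dimensional token, a single head of standard scaled dot-product attention is used, and the head uses global average pooling followed by one fully connected layer. The projection matrices `Wq`, `Wk`, `Wv`, the weights `w_fc`, `b_fc`, and all function names are hypothetical; Appendix B gives the paper’s actual formulation.

```python
import numpy as np

def feature_map_to_tokens(fmap):
    """(C, H, W) feature map -> (H*W, C) tokens, one token per pixel (assumed tokenization)."""
    c, h, w = fmap.shape
    return fmap.reshape(c, h * w).T

def tokens_to_feature_map(tokens, h, w):
    """(H*W, C') tokens -> (C', H, W) feature map; inverts feature_map_to_tokens."""
    return tokens.T.reshape(-1, h, w)

def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(tokens, Wq, Wk, Wv):
    """Single-head scaled dot-product self-attention: softmax(Q K^T / sqrt(d)) V."""
    q, k, v = tokens @ Wq, tokens @ Wk, tokens @ Wv
    scores = q @ k.T / np.sqrt(k.shape[-1])  # (N, N) token-to-token affinities
    weights = softmax(scores, axis=-1)       # each row sums to 1
    return weights @ v, weights

def refine_feature_map(fmap, Wq, Wk, Wv):
    """Low-level feature map -> tokens -> self-attention -> high-level feature map."""
    _, h, w = fmap.shape
    out_tokens, _ = self_attention(feature_map_to_tokens(fmap), Wq, Wk, Wv)
    return tokens_to_feature_map(out_tokens, h, w)

def discriminator_head(fmap, w_fc, b_fc):
    """Dashed-box head: pooling -> flattening -> fully connected -> scalar score."""
    pooled = fmap.mean(axis=(1, 2), keepdims=True)  # global average pooling -> (C, 1, 1)
    flat = pooled.reshape(-1)                       # flatten -> (C,)
    return float(flat @ w_fc + b_fc)                # fully connected layer -> scalar

rng = np.random.default_rng(0)
C, H, W, D = 8, 4, 4, 8
fmap = rng.normal(size=(C, H, W))
Wq, Wk, Wv = (rng.normal(size=(C, D)) for _ in range(3))
refined = refine_feature_map(fmap, Wq, Wk, Wv)
score = discriminator_head(refined, rng.normal(size=D), 0.0)
print(refined.shape, score)
```

The refined map keeps the input’s spatial size, which is what allows the generator to end in a map of the same size as its input; only the discriminator appends the pooling, flattening, and fully connected head to collapse it to one score.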


© 2026. The Author(s). Published by the American Astronomical Society.